This blog is about Logstash.

Installing Logstash

Depending on your operating system and your environment, there are various ways of installing Logstash. We will be installing Logstash on an Ubuntu 16.04 machine running on AWS EC2 using apt. Check out other installation options here.

Before you install Logstash, make sure you have either Java 8 or Java 11 installed. To install Java, use: `sudo apt-get update`

First, you need to add Elastic’s signing key so that the downloaded package can be verified (skip this step if you’ve already installed packages from Elastic): `wget -qO - | sudo apt-key`

The next step is to add the repository definition to your system: `echo "deb stable main" | sudo tee -a /etc/apt//elastic-7.x.list`

It’s worth noting that there is another package containing only features available under the Apache 2.0 license. To install this package, use: `echo "deb stable main" | sudo tee -a /etc/apt//elastic-7.x.list`

All that’s left to do is to update your repositories and install Logstash: `sudo apt-get update`, then `sudo apt-get install logstash`.

Configuring Logstash

Logstash configuration is one of the biggest obstacles users face when working with Logstash. While improvements have been made recently to managing and configuring pipelines, this can still be a challenge for beginners. We’ll start by reviewing the three main configuration sections in a Logstash configuration file, each responsible for different functions and using different Logstash plugins.

One of the things that makes Logstash so powerful is its ability to aggregate logs and events from various sources. Using more than 50 input plugins for different platforms, databases, and applications, Logstash can be configured to collect and process data from these sources and send it to other systems for storage and analysis. The most common inputs are file, beats, syslog, http, tcp, ssl (recommended), udp, and stdin, but you can ingest data from plenty of other sources.
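To make the three sections concrete, here is a minimal sketch of one pipeline with an input, a filter, and an output section. It is written as an entry in Logstash’s `pipelines.yml`, which accepts an inline pipeline definition through `config.string`; the pipeline ID, the Beats port 5044, the `via_logstash` tag, and the `stdout` output are illustrative assumptions, not settings taken from this post. In a package install, the same three sections more commonly live in a `.conf` file that the packaged `pipelines.yml` points to (typically under `/etc/logstash/conf.d/`).

```yaml
# Hypothetical pipelines.yml entry (ID, port, and tag are illustrative)
- pipeline.id: beats-demo
  config.string: |
    # input: where events come from (here, Beats shippers such as Filebeat)
    input {
      beats {
        port => 5044
      }
    }
    # filter: where events are parsed and enriched
    filter {
      mutate {
        add_tag => ["via_logstash"]
      }
    }
    # output: where processed events are sent (printed to the console here)
    output {
      stdout {
        codec => rubydebug
      }
    }
```

Swapping the `stdout` output for an `elasticsearch` output is the usual next step once the events look right on the console.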
If you’re here to evaluate Logstash, we typically recommend other options like Fluentd or Fluent Bit, which are lightweight log collectors that can handle most of Logstash’s log processing capabilities without the heavy computing footprint or the propensity for breaking. If you’re struggling with Logstash or the ELK data pipeline more generally, check out Logz.io Log Management to centralize your logs with out-of-the-box log ingestion, processing, storage, and analysis. We manage and scale OpenSearch (the newly forked version of Elasticsearch, maintained by AWS) on our SaaS platform, so you don’t have to do it yourself. The service includes parsing-as-a-service, which means our customer engineers will parse your logs for you.

Despite its popularity, Logstash has some serious shortcomings, chief among them its huge computing footprint and tendency to break. If you’re here because you want to get the most out of your existing Logstash installation, please read on!

I understand that when the modules are enabled, it is not necessary to include the log paths in the inputs of filebeat.yml. But if I am using a module (system, mysql, postgres, apache, nginx, etc.) to send records to Logstash through Filebeat, how do I insert custom fields or tags the same way I would in filebeat.yml when I configure the log paths? Each module handles its own configuration by default, including the path of the log files.

For this, I need to somehow detect which log I am reading (apache, system, mysql, access.log, error.log, IP/hostname, application) so that I can insert custom fields to filter on later in Kibana.
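One way to do this conditional detection without editing each module is a set of global processors in filebeat.yml. This is a sketch that assumes Filebeat 7.x, where events produced by a module already carry `event.module` and `event.dataset` (for example `apache.access`) by the time processors run; the `log_source` field name and its values are hypothetical. Because `add_fields` writes under `fields` by default, the values show up in Kibana as `fields.log_source`, the same place the `fields` of a regular input end up.

```yaml
# filebeat.yml (sketch; assumes Filebeat 7.x module events carry event.module/event.dataset)
processors:
  # Label Apache access logs
  - add_fields:
      when:
        equals:
          event.dataset: apache.access
      fields:
        log_source: apache_access   # hypothetical field name and value
  # Label MySQL error logs
  - add_fields:
      when:
        equals:
          event.dataset: mysql.error
      fields:
        log_source: mysql_error
  # Label everything from the system module, whichever fileset produced it
  - add_fields:
      when:
        equals:
          event.module: system
      fields:
        log_source: system
```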

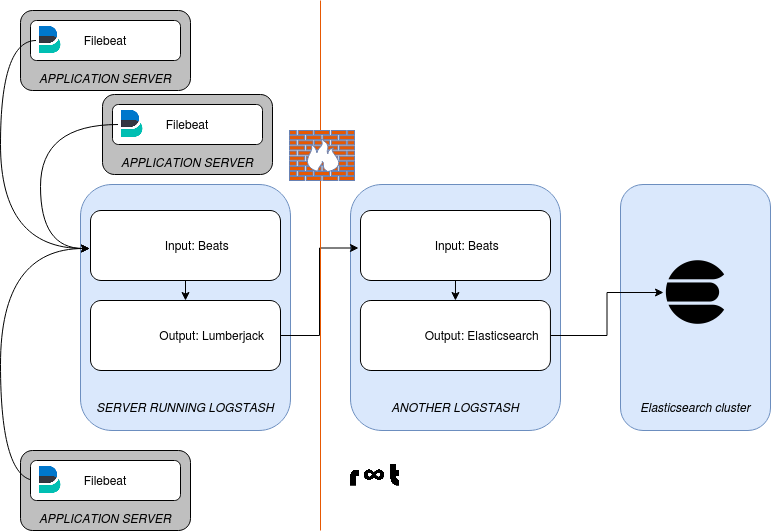

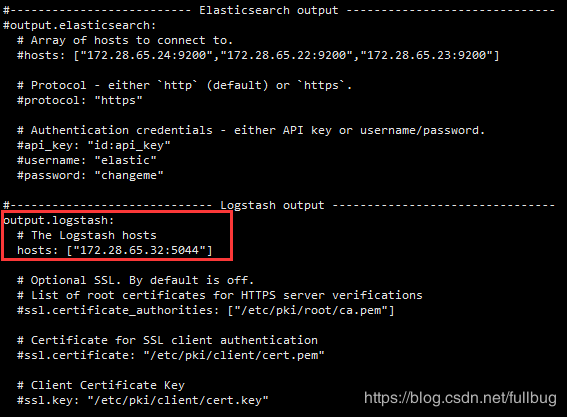

I am using the latest version of the ELK Stack, and I have Filebeat installed on different servers. I am using different Filebeat modules to send the logs. For the GlassFish application server, I created an input in /etc/filebeat/filebeat.yml that includes the path, fields, and tags, and it works. How do I add custom fields or tags as metadata, depending on the type or source of the log, so that I can filter on them in Kibana, given that each module handles its own path configuration for the log files?
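For module-based logs, the counterpart of the GlassFish input’s `fields` and `tags` is the module’s `input` section: each fileset starts a Filebeat input behind the scenes, and settings placed under `input:` in the module file are merged into that input’s configuration. The sketch below assumes Filebeat 7.x with the `apache` module enabled and configured in `modules.d/apache.yml`; the field names and tag values are hypothetical. Leaving `var.paths` unset keeps the module’s default log paths, so the path does not have to be repeated.

```yaml
# modules.d/apache.yml (sketch; field names and tag values are hypothetical)
- module: apache
  access:
    enabled: true
    # var.paths omitted: the module keeps its default log locations
    input:
      # The same settings you would put on a regular input in filebeat.yml
      fields:
        log_source: apache_access
        application: my-web-app
      tags: ["apache", "access"]
  error:
    enabled: true
    input:
      fields:
        log_source: apache_error
        application: my-web-app
      tags: ["apache", "error"]
```

With this in place, a Kibana query such as `fields.log_source : apache_error` or `tags : access` should select the matching events, and the same `input:` block can be added under the filesets of the other modules (system, mysql, postgresql, nginx).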
